Cyber Propaganda - What Ads Are Being Pushed to You?

This post was created back in 2016 - it is still relevant now


The (not so) recent Twitter bot wars between Rep. and Dem. campaigns in the United States elections in 2016 and during the presidential debates are just one of the recent examples showing that big government, companies and all kinds of special interests take social media very, very seriously. Hardly news, of course.

What has, however, become increasingly apparent in recent months is the large scale industrialisation of the creation of social content by automated means and by activists utilising technology to organise themselves, meme factories of course being one obvious example.

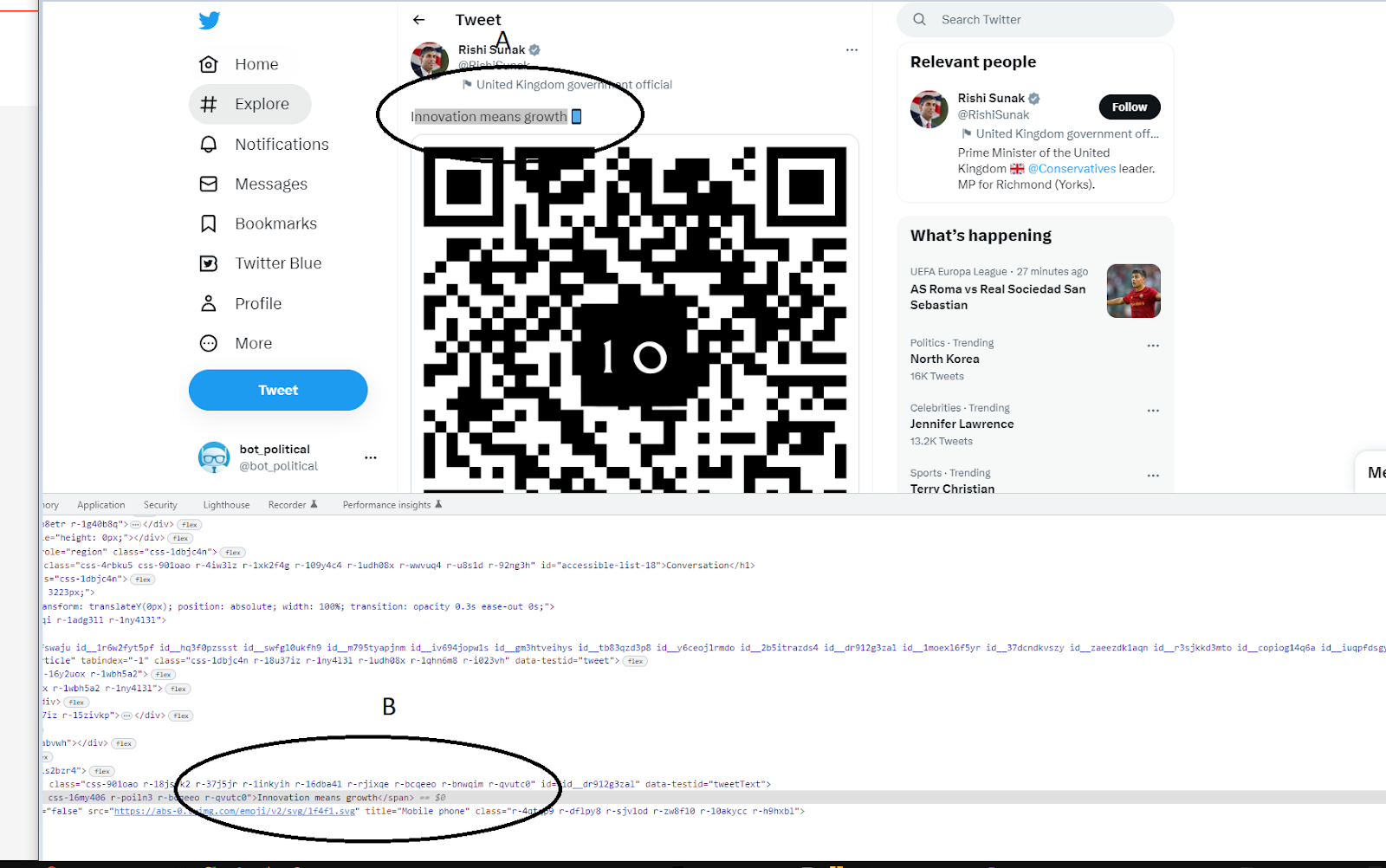

In the future it is all but inevitable that bots purporting to support ideologies, candidates, parties, and even corporations will become commonplace (in order to, for example, maintain the image of public consensus, or the image of two warring opposite sides with no common ground even though the reality may be different). In addition (although the technology is probably not there at present), it may even become possible to have interactive bots simulating human beings that state support for these things. One could imagine that bots which can simulate short answers in newspaper website or YouTube comment sections, and which have some measure of interaction, are already in existence, although I doubt that current technology allows for much subtlety, let alone a pass of something like the Turing Test (side note: not actually an intelligence test and more of a thought experiment). I hope I am wrong.

It is a significant weakness of humans that we don't routinely perform the Turing Test ourselves on apparent people on social media. It's sometimes easy to spot the unnatural - look for extremely large follower counts, a prolific posting/tweeting history and significant numbers of re-tweets, to name but a few of the many things and patterns we do know. The rest, sadly, still elude us. Oh yes, there may even be Cyborg Twitter bots: real people who have relinquished occasional control of their social media account to a bot, or sock puppet accounts that are controlled by both human and automated means. See many auto re-tweets much? Open your eyes and you will see.
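The warning signs listed above can be written down as a rough checklist. The sketch below is only an illustration in Python: the `Account` fields and every threshold are hypothetical numbers picked for the example, not validated figures, and a real account can trip all three flags while being perfectly human.

```python
from dataclasses import dataclass


@dataclass
class Account:
    followers: int
    posts_per_day: float
    retweet_ratio: float  # share of posts that are re-tweets, 0.0 to 1.0


# Hypothetical thresholds, chosen only for illustration.
MAX_FOLLOWERS = 100_000
MAX_POSTS_PER_DAY = 50.0
MAX_RETWEET_RATIO = 0.8


def suspicion_flags(account: Account) -> list:
    """Return the human-readable warning signs this account triggers."""
    flags = []
    if account.followers > MAX_FOLLOWERS:
        flags.append("very large follower count")
    if account.posts_per_day > MAX_POSTS_PER_DAY:
        flags.append("prolific posting")
    if account.retweet_ratio > MAX_RETWEET_RATIO:
        flags.append("mostly re-tweets")
    return flags


# An account that posts constantly and almost only re-tweets.
noisy = Account(followers=1_200, posts_per_day=300.0, retweet_ratio=0.95)
print(suspicion_flags(noisy))
```

A list of flags rather than a single yes/no keeps the point of the paragraph intact: these are patterns worth a second look, not proof.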

Other new phenomena include the use of techniques such as "AstroTurfing". For the uninitiated, AstroTurfing is the (sometimes online) simulation of grassroots support. It has been used to moderately successful effect in America, with the supposedly grassroots Tea Party movement (in large part sponsored by the Koch brothers), and arguably to great effect in the recent EU Referendum in the United Kingdom by the Leave.EU campaign (N.B. distinct from the "official" Vote Leave campaign) - in this case it catalysed pre-existing public opinion about a variety of concerns, set up a common political enemy and organised people to act in co-ordination. Nothing wrong with that in and of itself: that is politics. An ugly part but a part nonetheless.

AstroTurfing may include the use of bots or may employ the use of sock-puppet accounts controlled by human beings, in turn employed by special interests. This means end aims are not necessarily publicised to the voters/activists mobilised. I will keep this neutral and say that does not necessarily mean voting either way is wrong - only that this is part of what AstroTurfing is.

Just the above mentioned technologies could result in a new type of asymmetrical situation where human beings are subjugated in large numbers by intimidation from relatively small groups in control of particularly specialised technology - note that this is not necessarily dependent on wealth or power, though it likely is at this present time. Consequently, under subjugation, they do not wish to be seen to be speaking out against "accepted" ideas or policies in the normal human social space offline. This would particularly be the case where debate has become polarised and online speech (by both bots and the most polarised humans) has become physically threatening and abusive.

There's nothing new in the way that people are subjugated/manipulated/ruled/used here - it may have just become more efficient.

Part two : Arguments that start online and finish in the street

The bots or activists could even be purposed to act in any way as any type of character to make an opinion about a situation as presented by the news/political party/person seem legitimate. Some humans could conceivably live in states of complete unreality when it comes to current affairs and the devastating effects on the mental health of vulnerable people might be only one of the more obvious consequences of this.

Even worse: arguments started online could end up in physical violence on the street, with discussion on these issues having already escalated past the point at which civilised discourse is an option - for example, as seen in the recent threats of violence and rape (particularly aimed at women) around women in video games, or where Donald Trump singles out particular people on Twitter to rail against and his followers (be they bot or human) then persecute and threaten that person. These are things that happen. What is unclear is the role of artificial intelligence in all this. Certainly the training data sets are available to push content to users based on expressed preferences on social media. How hard would it be to simulate a person based on collected data? A supposedly real online persona that actually doesn't exist. Not straightforward, but not totally out of reach either. At this point in time the technology is still immature and people have a chance to learn about it, and with that knowledge comes the armour to render it less effective in subjugation.

Much social media data is publicly available - in particular, Facebook data where people have "liked" certain things could be used to train an AI to categorise people into groups and serve content accordingly. This could be done in combination with psychometric testing (the Stanford University study described here does just that).


Wired Stanford Study Article
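To make the idea of sorting people into groups from their "likes" a little more concrete, here is a deliberately crude Python sketch. It is not the method used in the Stanford study: it simply compares a person's likes with a few hand-written group profiles using Jaccard similarity (shared likes divided by all likes). The group names, the liked items and the function names are all made up for illustration.

```python
def jaccard(a: set, b: set) -> float:
    """Overlap between two sets of likes: 0.0 (nothing shared) to 1.0 (identical)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


# Hypothetical group profiles; a real system would learn these from data.
GROUP_PROFILES = {
    "gamers": {"console_x", "game_reviews", "esports"},
    "gardeners": {"allotments", "seed_swaps", "composting"},
}


def assign_group(likes: set) -> str:
    """Put a person in the group whose profile overlaps most with their likes."""
    return max(GROUP_PROFILES, key=lambda group: jaccard(likes, GROUP_PROFILES[group]))


print(assign_group({"esports", "game_reviews", "composting"}))
```

Even something this simple is enough to decide which content to put in front of whom, which is the worrying part.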

The danger here is obviously that narratives could be pushed onto people in such a way as to be as manipulative as possible while organising them into coordinated groups. For example, imagine content served according to people's personalities by algorithm. Person a dislikes y - content served stating y will no longer be a problem with political candidate 1. Person b dislikes x - content served stating x will no longer be a problem with political candidate 1, and so on. Now I ask you the question - what if the solution to solving x and the solution to solving y conflict? For example, say solving x means affordable housing and solving y means policies that enable landlords to charge whatever they want - obviously those two things are fundamentally conflicted, but if content is targeted it is conceivable that person a and person b could vote for the same candidate. Given current form, in all likelihood said candidate, if elected, would then address problem z (ignoring problems x and y), which is how to store large sums of their money without being taxed.

It is a simplified example, but if there's one thing I have learnt it is never to underestimate the stupidity and gullibility of human beings. We really are not as smart as we think we are, at least not on a consistent basis. Machines, if nothing else, are always consistent - can we guarantee we are always on our guard in the same way?
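The x and y example can be played out in a few lines of Python. This is a toy sketch only: the people, the issues and the table of conflicting issues are hypothetical stand-ins, and the function names are mine. It shows how each person only ever sees the promise aimed at them, while the conflict between the promises is visible only to someone who can see all of them at once.

```python
# Hypothetical data: each person and the issue they most dislike.
dislikes = {"person a": "y", "person b": "x"}

# Hypothetical: pairs of issues whose solutions cannot both be delivered.
CONFLICTING = {frozenset({"x", "y"})}


def targeted_message(issue: str, candidate: str) -> str:
    """The tailored promise shown to someone who dislikes `issue`."""
    return f"{issue} will no longer be a problem with {candidate}"


def serve(dislikes: dict, candidate: str) -> dict:
    """Each person receives only the message aimed at their own dislike."""
    return {person: targeted_message(issue, candidate)
            for person, issue in dislikes.items()}


def conflicting_promises(dislikes: dict) -> list:
    """List pairs of promised issues that conflict with each other."""
    issues = sorted(set(dislikes.values()))
    return [(a, b)
            for i, a in enumerate(issues)
            for b in issues[i + 1:]
            if frozenset({a, b}) in CONFLICTING]


for person, message in serve(dislikes, "candidate 1").items():
    print(person, "sees:", message)
print("conflicts nobody is shown:", conflicting_promises(dislikes))
```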

To stretch this hypothetical situation to the limit, imagine if bots supporting one ideology successfully provoked a group of people with a philosophically diametrically opposed ideology, moving them to actual violence.

Part three : The Obfuscation of Common Ground and Viral Abuse

The issues with polarisation are obvious - even when one has "won" an argument it seems as if it is more about winning, after which the winner gets to define the truth in all matters, rather than about the truth being reached in the particular matter being debated. The phenomenon of echo chambers and triggering illustrates this well. People are labelled "right wing" or "left wing" according to a set of criteria, e.g. left is the set of people displaying any single one of the following characteristics - actionable sympathy for refugees, socialism etc. - while right has emphasis on security, small government etc. The fact that individuals may have a mixture of opinions on the criteria is rendered irrelevant in many online discussions because that is the nature of polarisation. This shows one way in which, for polarised debaters, truth claimed in one matter leads to truth being wrongly claimed in all matters. Essentially, after winning an online argument, you'll often see people wax lyrical about all the other things that they think are wrong, based on their one perceived "owning" of another person online.

In addition to this is the very real phenomenon of the filter bubble on social media, where content is served based on prior preferences (by default, that is - there are examples where this can be gotten around in settings), meaning that opinions are reinforced by the results returned or content served - further polarising the user.

Take as an example the fact that one of the things that binds the vast majority of people on the planet is that we are almost all victims of unequal wealth distribution. And on this common ground we sacrifice grounding logic to the God of Short-Term Trolling Gratification. We don't discuss this most obvious of inequities.

Where large countries or communities are ostensibly split in opinion, e.g. the EU Referendum in the UK or the recent 2016 US General and Presidential elections, it is hard to deny that online discourse exists in polarised camps on most popular fora for discussion. As well as the aforementioned bot issues, there is the issue of an almost complete lack of courtesy online. I imagine there are many reasons this could be so, but my personal experience is that when people think they can avoid consequences their behaviour is often not as good as it could be. Courtesy is an essential part of discussion - it isn't being a pussy to expect courtesy when discussing important things. This is so clear in the post-truth online discourse: "Who cares about [controversial topic 1 opinion A] I have [controversial topic 1 opinion B]? It is well known that those people with [controversial topic 1 opinion A] are pussy snowflakes so there! There are also only two possible opinions and anyone who tries to make friends with us without agreeing with [controversial topic 1 opinion B] is an enemy." This rude approach to matters simply does not work very well, even when it is subjectively or objectively truthful, because its lack of courtesy creates enemies needlessly. Truth is again obscured. What if one is an "enemy" on one controversial topic and a "friend" on another? Hopelessly fractured relationships would start with such extreme words said between relative strangers.

This exists on both the remain and leave sides in the EU referendum, and on the pro-Trump/anti-Trump sides in the US Election as far as I can see.

Other examples that are more benign can be seen on YouTube daily. The comments come as a result of something banal like Sony vs Microsoft, or reviews of games or films. Comments are almost pointless in this scenario since when someone questions another's parentage based on opinion of a video game then what sensible discussion can be had?

Trolling can also be done for amusement of course.

However, these dialectic arguments are surely ones where people need to be courteous if their intention is actually to have a discussion about what is in reality a very luxurious leisure pursuit. They are only discussions of opinions after all. Again, imagine abuse bots programmed by the large corporations who sell these products to consolidate their fan bases - giving people the illusion they might be part of some exclusive tribe and where particular forms of abuse have become almost viral to help "Grow their Brand".

Part four : Divide and Conquer - the Magnetic Field of Human Tribalism

To incumbent wielders of power, both economic and political, it is absolutely in their interest that the people from whom their power derives argue amongst themselves, particularly as education is still nearly universal in developed countries and good proportions of people go on to be university educated. It may not stay like this and I'm really worried about it - what's the aim of not educating a population unless you plan to subjugate them in some way? In my opinion this is a way to treat them as Human 2.0 - a consumer, uninterested and uneducated, and extremely unlikely to perceive the inequity to which they are subject or, if they can perceive it, someone to whom it will be relatively easy to convincingly present scapegoats.

As an example take Brexit - the areas which voted to leave against the wishes of the at-the-time establishment tended to be poorer and less educated. This doesn't mean that their vote was necessarily wrong, but the picture put forward online and in mainstream media by the multiple leave campaigns was one where all the things they saw as bad (lack of housing, lack of investment, lack of public services and healthcare) were presented to them as a result of being under the power of the many-tentacled European Union, when it could be argued that they are a result of local UK policy - will those policies change in the event we leave the EU, for example? Then on the other side is the characterisation of leave voters as racist, ignorant, uneducated (because of the pattern spotted above) or in league with capitalist extremists. The online conversation is so different to the conversations in real life - at least in my experience.

I think most people would agree discussion of these things online is difficult - because it is so polarised. This is not to say what I am saying about Brexit is true - but that discussing anything to do with it is polarised. How many of the discussions you see online are real people saying things? Are people really so polarised? Do people use sock puppet accounts to make it seem like there are more people with polarised opinions, or does the lack of real consequences allow people to vent? It's simple psychology that people gravitate towards groups; we are sociable animals. That goes even for those of us who aren't particularly gregarious, myself included. If those polarised groups are presented online to them - and people gravitate towards one or the other, then does this lessen the chance for co-operation amongst real life people?

In these situations journalistic integrity becomes extremely important, as does awareness of the current capabilities of technology. I saw a protest sign on a street march against (I think) SOPA, the [insert actually what it is as I'm not sure right now but doesn't matter for my point] controversial internet copyright law in the States, which said "It's no longer OK to not know how the internet works Fuck SOPA" (I'm paraphrasing here but it was something like that, because I want you to see how jarring it is when I don't confirm my source and rely on my memory as I write this), and that struck me as very true.

This is a case of the humanities and computer science mixing so perhaps in future it would be good to have tech news be less about the latest iteration of the same product, effectively acting as free advertisement for tech industry companies, and more about the societal impact. On a typical mainstream news website the tech section may mention the odd sociological impact of technology but is often more focussed on tech failures like Samsung Note 7 exploding or the aforementioned latest iterations of tech.

Cyber warfare potentially affecting the outcome of the US election? That does not matter: the latest Samsung phone leak shows a thin top bezel... for fuck's sake. Does this belong in the Tech Section, or is Tech in the News Section?

Part five : The Netizens Fight Back

Without claiming authority to define what a wise Netizen is or what they should do - one thing might be to take any anonymous post with a large pinch of salt. If you do wish to take controversial anonymous posts to represent real opinion then there are at least a couple of things to think about. One is - is it anonymous because there are laws chilling free speech? Another is - is it anonymous because people just want to vent and show the bad parts of their psyche online? Yet another is - is it anonymous because the opinion is so leftfield that people do not want to be associated with that opinion in real life? In other words, societal reasons for chilling free speech rather than laws. And I use free speech here not in the legal sense, where speech is protected, but in the general sense in which people talk about it - if there is such a thing.

Another might be to stick to getting info from sites which require a reasonable amount of identity verification before people can discuss things or choose sites which are more closely moderated. Obviously the former is only an option in the more advanced countries because of the aforementioned free speech issues.

A good rule is also that if you are going to say something online that would result in you being punched in the face in real life, then maybe do not say it in the way you are going to say it, because you are probably just being a cunt. If you have a point, make it, but try to do it in a way that communicates the point without the baggage. Don't blame other people for being offended because you are incapable of framing ideas without insulting/threatening/degrading or dehumanising others. Surely any civilised idea can be communicated in such a way? If it cannot, then maybe it is a bad idea and is a manifestation of primal hatred and evil. Surely no idea is so bad as that.

Outside of the bots are the organised groups which also act to polarise opinion and see free speech as the ability to spout vitriol, create conflict and divide communities.

That is really another discussion. It is related, though, in that these groups might use technology, or the naivete and biochemistry of the young and adolescent brain, or the technical ignorance of the older Silver Surfers, to spread their messages.

Although I'm sure there are other solutions, perhaps an important one might be to approach other people in real life with courtesy and an open mind and heart. If they turn out to be arseholes then sure, robustly defend yourself. I bet that the overwhelmingly vast majority of people are not arseholes, just want to get along and get on and try not to get ripped off as they go about their lives. In having such heated abusive discussions online it seems to me it might be easy to lose the truth of the more Earthly world of interactions, where there are subtleties we aren't capable of putting into the forms of expression that we might use online. Of course, on a darker note, it may be that people are simply scared to speak their truth in the physical world and they really are hateful on the inside. Maybe the truth is somewhere in-between but I hope it's nearer to the former than to the latter.

This is the playground of the human mind. People have felt free to inhabit cyberspace since it was invented. There are no rules - but there are already natural laws. Heated discussions can turn into violence same as anywhere else - is it better to practise non-violence and courtesy online until we know what's up? I also believe that survival of the fittest is a natural law, but as humans we don't have to let people die, and it seems fitting that we can use this technology to help each other, proving our fitness by cooperation to help ourselves adjust to this new reality rather than through domination and warfare. That seems especially so given the risks involved in not doing this - such as turning cyberspace into a super efficient arena for a new type of warfare, using the new-found ability for networking and co-operation to create and then conflict with enemies rather than for all the amazing things it can really do to bring people together to solve the GLOBAL problems that we face not just as a species, but as inhabitants of the planet Earth (among many other beings and lifeforms), and to make more leisure time for humans in a less harmful way than we currently do.

Finally: if you doubt the real threat, as opposed to theoretical, that the manipulation of new technologies poses with respect to propaganda, suppression of debate and election gaming, ask yourself this question: if it works, would already powerful people, if aware of its efficacy, use it? I think we all know the answer to that. Does the internet offer novel tools and scope for dissemination of propaganda and, not only that, full on psychological operations and experiments on the public? Probably. Since it does, it follows that this is likely to be happening.